
Every business executive and manager needs to understand the concepts of Artificial Intelligence (AI), Machine Learning (ML), and Deep Learning (DL). This disruptive, fast-evolving technology is already changing many businesses today and will change many more in the near future. Here is a brief introduction to the essentials you must know to better harness its power for your business and direct its usage within your organization.

This post is based on part of the “Data and AI Ideation Workshop” that we (Aiola) deliver to many traditional companies to bootstrap significant AI transformations and improve business thinking about integrating AI.

AI is not new

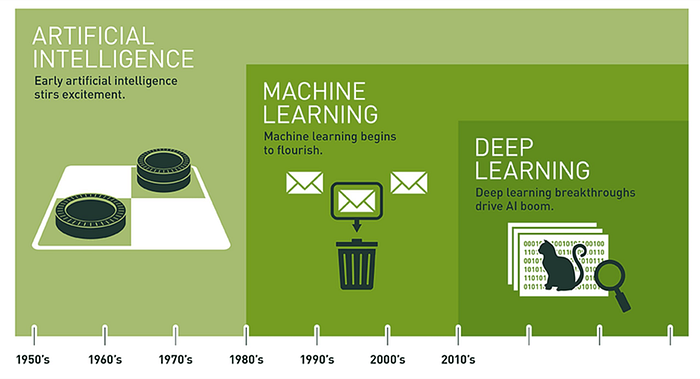

Since the 1950s, efforts to use the newly invented mechanical computation technology for tasks more complex than arithmetic have pushed researchers, engineers, and businesses to try to realize the dream of artificial intelligence.

The dream didn’t start then.

“Any sufficiently advanced technology is indistinguishable from magic.” — The third law of Arthur C. Clarke

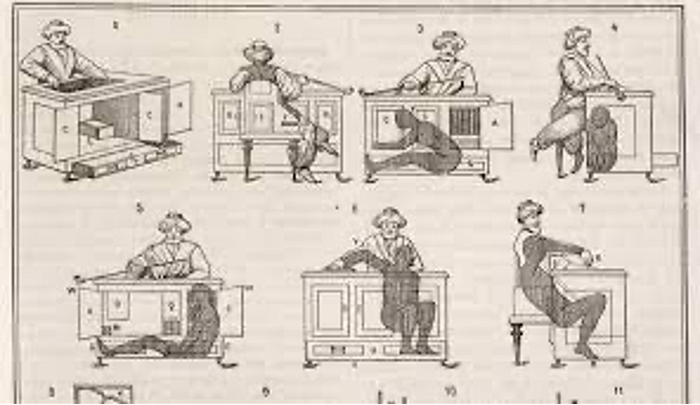

The Mechanical Turk, or Automaton Chess Player, was a fake chess-playing machine constructed in the late 18th century. From 1770 until its destruction by fire in 1854, various owners exhibited it as an automaton, though it was eventually revealed to be an elaborate hoax. In fact, the Turk was a mechanical illusion that allowed a human chess master hiding inside to operate the machine. With a skilled operator, the Turk won most of the games it played during demonstrations around Europe and the Americas over nearly 84 years, defeating many challengers, including statesmen such as Napoleon Bonaparte and Benjamin Franklin.

The technology of the 18th century could not make a machine play chess successfully, let alone beat the world champion. The world had to wait more than two hundred years for that to happen.